Machine learning & AI

Our research projects fuse modern methods from machine learning and AI, such as deep learning and Gaussian processes, with more traditional methods from statistics.

Staff

Postgraduate research students


Machine Learning and AI - Example Research Projects

Information about postgraduate research opportunities and how to apply can be found on the Postgraduate Research Study page. Below is a selection of projects that could be undertaken with our group.

Structured ML for physical systems (PhD or MSc)

Supervisors: Lawrence Bull

Relevant research groups: Machine Learning and AI, Emulation and Uncertainty Quantification

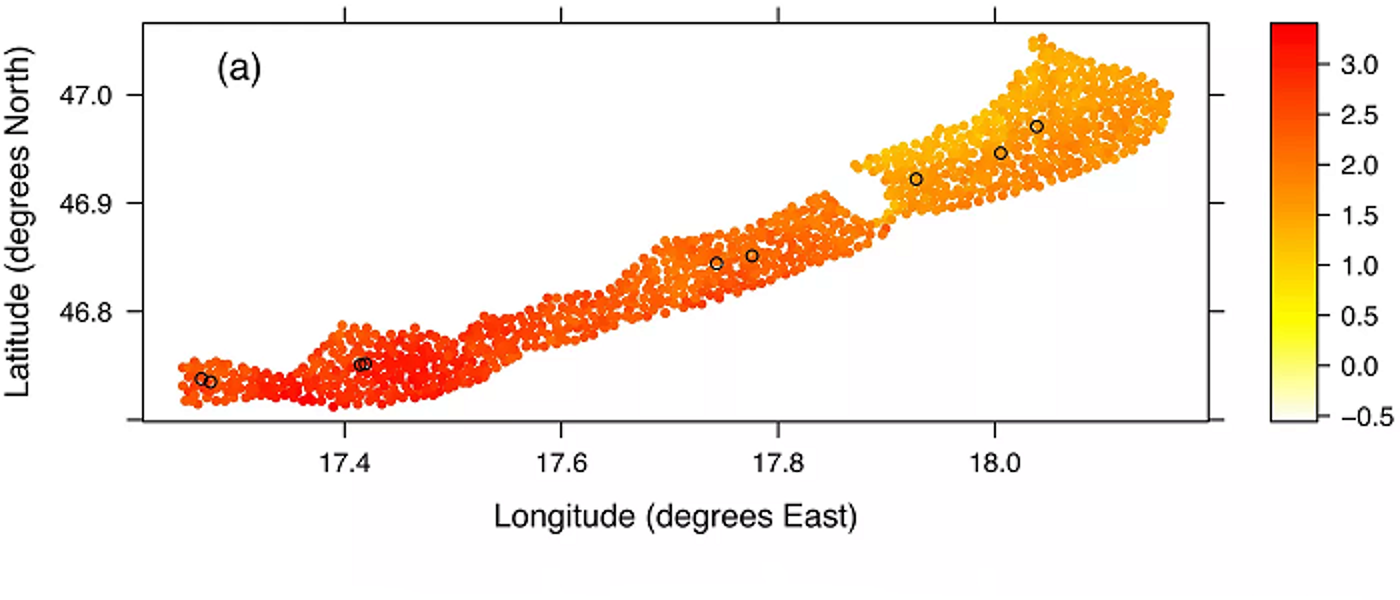

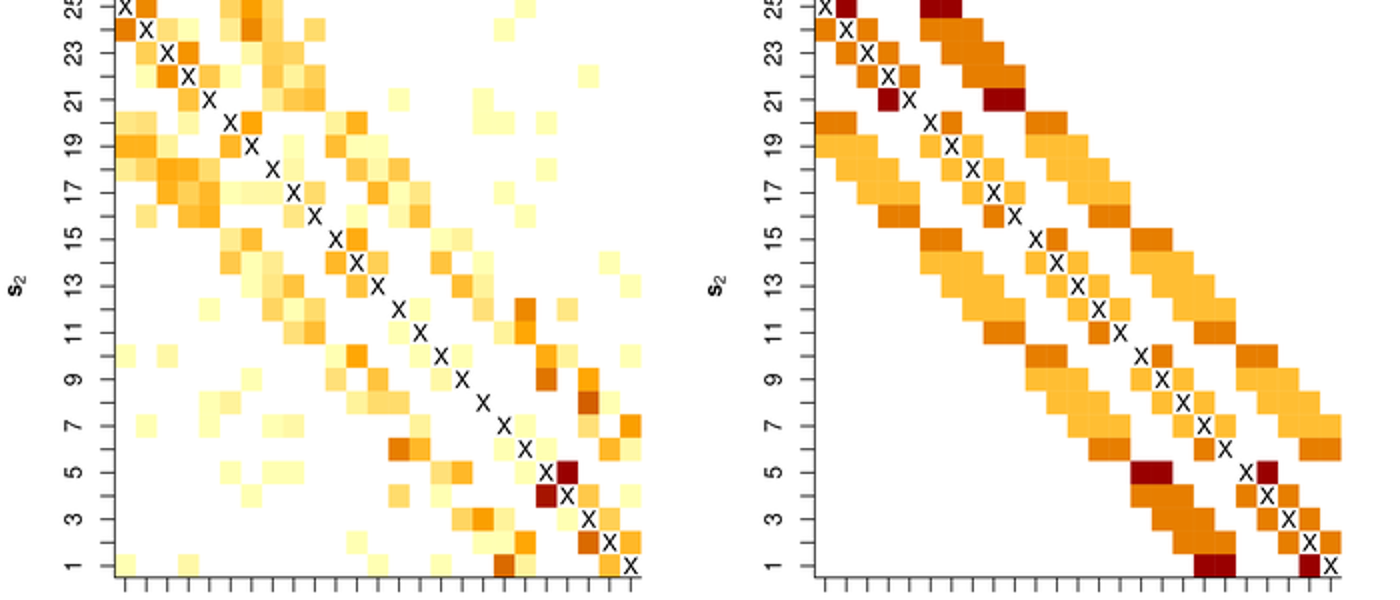

When using Machine Learning (ML) for science and engineering, a different mindset is required to build sensible representations from data. Unlike in other applications (e.g. large language models), the datasets are relatively small and curated: they are collected via experiments rather than scraped from the internet. The limited variance of training data typically renders learning by ‘brute force’ infeasible. Instead, we must encode domain-specific knowledge within ML algorithms to enforce structure and constrain the space of possible models.

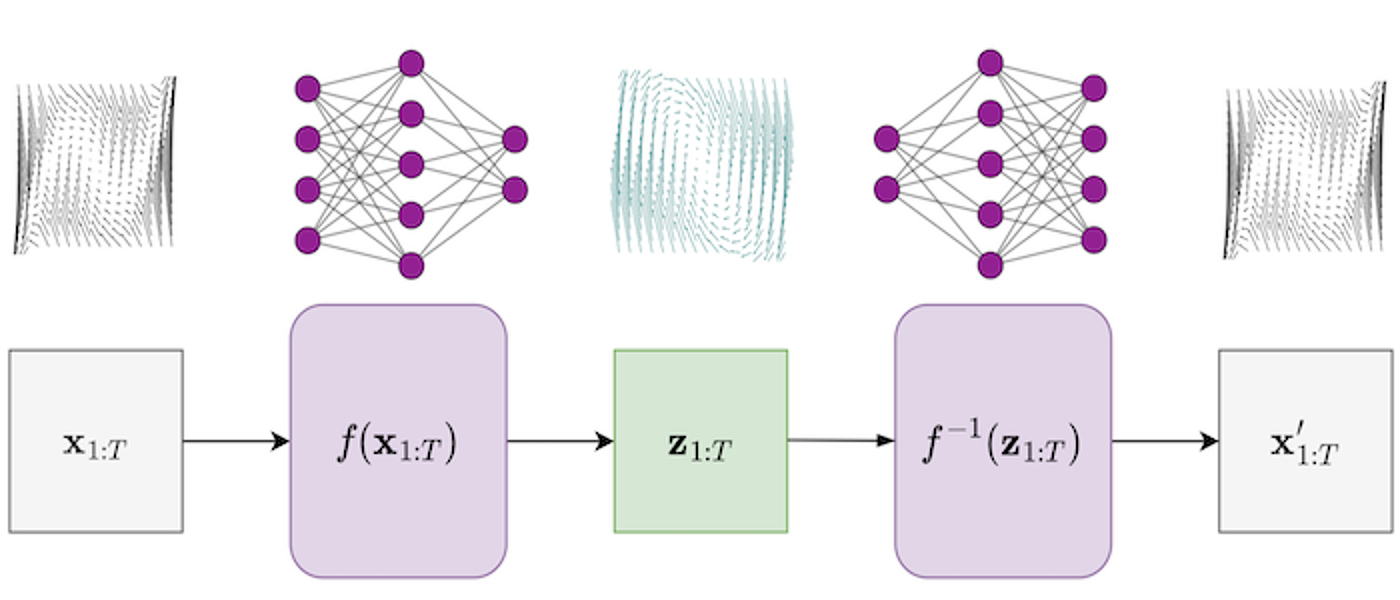

This project covers ML for physical systems (Karniadakis, 2021) and aims to integrate machine learning with applied mathematics, fusing scientific knowledge with insights from data. It will investigate constraints of varying strength on ML predictions, such as smoothness and invariances. Relevant topics include:

- physics-informed machine learning (Girin, 2020)

- scientific machine learning (Pförtner, 2022)

- hybrid modelling
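
As an illustration of how a known invariance can constrain the space of possible models, the sketch below fits a small dataset with a basis that is symmetric by construction. It is a minimal example under stated assumptions, not a method taken from the project: the response is assumed to be even, f(x) = f(-x), and the function names, the polynomial basis and the synthetic data are all hypothetical.

```python
import numpy as np

def even_features(x, degree=4):
    """Polynomial features restricted to even powers, so f(x) == f(-x) by construction."""
    powers = np.arange(0, degree + 1, 2)  # 0, 2, 4, ...
    return x[:, None] ** powers[None, :]

def fit_even_model(x, y, degree=4):
    """Least-squares fit over the even-power basis; returns the coefficient vector."""
    coeffs, *_ = np.linalg.lstsq(even_features(x, degree), y, rcond=None)
    return coeffs

def predict(coeffs, x, degree=4):
    """Evaluate the fitted even polynomial at the points x."""
    return even_features(x, degree) @ coeffs

# Hypothetical small dataset: only x >= 0 is observed, with a little noise.
rng = np.random.default_rng(0)
x_train = rng.uniform(0.0, 1.0, size=8)
y_train = np.cos(2.0 * x_train) + 0.05 * rng.normal(size=8)  # assumed even response

coeffs = fit_even_model(x_train, y_train)

# The symmetry holds for any inputs, including x < 0 where no data was seen.
x_test = np.linspace(-1.0, 1.0, 5)
print(predict(coeffs, x_test))
print(predict(coeffs, -x_test))
```

Because the symmetry is built into the features rather than learned, the model extrapolates consistently to negative inputs even though none appear in the training data.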

Medical image segmentation and uncertainty quantification (PhD)

Supervisors: Surajit Ray

Relevant research groups: Machine Learning and AI, Imaging, Image Processing and Image Analysis

This project applies medical imaging and uncertainty quantification (UQ) to the detection of tumours. It aims to provide clinicians with accurate, non-invasive methods for detecting tumours and classifying them as malignant or benign. To do so, it seeks to combine advanced medical imaging technologies such as ultrasound, computed tomography (CT) and magnetic resonance imaging (MRI) with the latest artificial intelligence algorithms. These methods will automate the detection process and may be used to determine malignancy with a high degree of accuracy. UQ techniques will support more reliable predictions of tumour malignancy by characterising the degree of uncertainty associated with the diagnosis. Combining medical imaging with UQ is expected to reduce the need for invasive procedures such as biopsies and to shorten the time to diagnosis. The project will also benefit from the development of novel image processing algorithms (e.g. deep learning) and machine learning models, which will help improve the accuracy of tumour detection and assist clinicians in making the best treatment decisions.
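
One common way to attach an uncertainty estimate to a segmentation is to average the per-pixel tumour probabilities from several models and compute the entropy of the averaged prediction. The sketch below illustrates that idea only; it is an assumption about how UQ might be done, not the project's method. The function name and the random probability maps (standing in for real model outputs) are hypothetical.

```python
import numpy as np

def predictive_entropy(prob_maps, eps=1e-12):
    """Per-pixel mean probability and binary entropy for an ensemble.

    prob_maps: array of shape (n_members, H, W) holding tumour probabilities in [0, 1].
    Returns (mean_prob, entropy), each of shape (H, W). Entropy is in nats.
    """
    mean_prob = prob_maps.mean(axis=0)
    p = np.clip(mean_prob, eps, 1.0 - eps)  # avoid log(0)
    entropy = -(p * np.log(p) + (1.0 - p) * np.log(1.0 - p))
    return mean_prob, entropy

# Stand-in for the outputs of five segmentation models on a 4x4 image.
rng = np.random.default_rng(0)
prob_maps = rng.uniform(size=(5, 4, 4))

mean_prob, entropy = predictive_entropy(prob_maps)
segmentation = mean_prob > 0.5  # predicted tumour mask
print(segmentation)
print(entropy.round(3))         # higher values mark less certain pixels
```

Pixels with entropy near log 2 are those where the averaged prediction is close to 0.5, which is where a clinician might want to look more closely.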

Seminars

Regular seminars relevant to the group are held as part of the Statistics seminar series. The seminars cover various aspects across the AI3 initiative and usually span multiple groups. You can find more information on the Statistics seminar series page, where you can also subscribe to the seminar series calendar.

Recent innovations in generative AI models such as ChatGPT have brought the fields of machine learning and AI to the forefront of modern research. Many of these methods are based on core statistical principles and methodology, so there is a large interface between machine learning, AI, and statistics.

The Machine Learning and AI group works on several methods at this interface, with ongoing research projects using Generative Adversarial Networks (GANs), graph neural networks, and more traditional machine learning methods such as Gaussian processes.