Analysing Cognitive Health via Speech Interactions with an Augmented Reality Zoomorphic Robot

Supervisor: Dr Shaun Macdonald

School: Computing Science

Partner: KinaBot

Description:

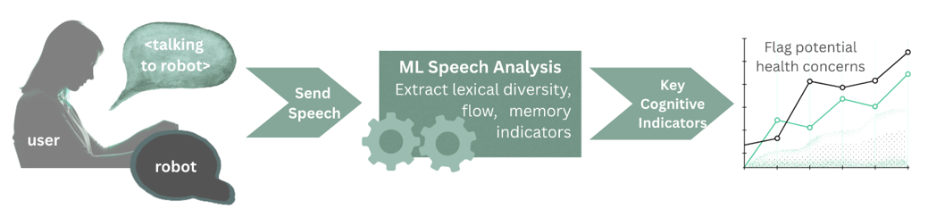

The need to support healthy ageing and early identification of cognitive decline is an urgent societal challenge. Speech features, such as fluency, word searching, cadence and acoustic markers, can act as sensitive indicators of cognitive health. However, speech-based assessment is typically conducted through medicalised testing that can interrupt daily life and undermine user comfort. At the same time, social companion robots are already deployed in care homes and assisted living settings to provide emotional support and reduce loneliness. This project explores whether speech indicators can be captured passively during natural interaction with the social robot PARO, situating cognitive health monitoring within an existing, trusted and supportive robot.

Despite the promise of embedding speech-based digital biomarkers in these robot interactions, it remains unclear whether spontaneous speech directed at a zoomorphic robot is sufficient in quantity and quality for meaningful analysis. Furthermore, integrating advanced speech detection into deployed robotic systems presents practical and ethical challenges. This project will address these gaps by integrating a speech analysis engine, developed in collaboration with KinaBot, into our state-of-the-art Augmented Reality AZRA robot prototyping framework.

This will be the first study to integrate passive speech-based cognitive health markers into a zoomorphic therapeutic robot and evaluate their feasibility. AR allows new sensing and feedback capabilities to be scaffolded onto existing robots without physical modification, enabling rapid, ecologically valid evaluation. Working with KinaBot also provides access to an industry-grade speech analytics pipeline and offers a clear pathway to real-world translation and impact.

The student will adapt our AR framework for use with PARO and implement the speech analysis pipeline in coordination with KinaBot, then design and conduct a user study comparing interaction scenarios (e.g., free conversation, prompted interaction, tasks) to evaluate how much speech occurs and whether it supports feature extraction.
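As a rough illustration of what "how much speech occurs" could mean in practice, the sketch below summarises speech quantity for one interaction session from word-level timestamps. It is not KinaBot's pipeline or part of AZRA: the `Word` structure, the `speech_quantity` function, the chosen metrics and the 0.5-second pause threshold are all assumptions made for illustration only.

```python
from dataclasses import dataclass


@dataclass
class Word:
    """One transcribed word with timestamps in seconds (hypothetical format)."""
    text: str
    start: float
    end: float


def speech_quantity(words, session_seconds, pause_threshold=0.5):
    """Summarise how much speech a session contains.

    pause_threshold is an illustrative value, not a validated clinical cut-off.
    """
    if session_seconds <= 0:
        raise ValueError("session_seconds must be positive")

    ordered = sorted(words, key=lambda w: w.start)
    # Total time spent actually producing words.
    speaking = sum(w.end - w.start for w in ordered)
    # Count gaps between consecutive words that reach the pause threshold.
    long_pauses = sum(
        1 for a, b in zip(ordered, ordered[1:])
        if b.start - a.end >= pause_threshold
    )
    return {
        "word_count": len(ordered),
        "speaking_seconds": speaking,
        "speech_ratio": speaking / session_seconds,
        "words_per_minute": len(ordered) / (session_seconds / 60),
        "long_pauses": long_pauses,
    }


# Example: three words spoken during a 60-second session.
example = [
    Word("hello", 0.0, 0.5),
    Word("there", 0.6, 1.0),
    Word("paro", 2.0, 2.5),
]
summary = speech_quantity(example, session_seconds=60)
```

Computing the same summary for each interaction scenario (free conversation, prompted interaction, tasks) would give one simple basis for comparing speech quantity across conditions; judging speech quality would need acoustic and linguistic features beyond this sketch.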

Project Timeline:

- Weeks 1–2: Review literature on speech-based cognitive health indicators and existing socially assistive robot deployments.

- Weeks 3–4: Integrate KinaBot’s speech analysis engine into the AZRA AR framework and adapt the system for PARO.

- Weeks 5–7: Design and conduct a user study examining speech quantity and quality across interaction conditions.

- Weeks 8–10: Analyse study data to understand whether speech interactions with robots correlate with effective detection of cognitive indicators, then contribute findings to an academic manuscript.

This study will evaluate the feasibility of embedding passive cognitive health monitoring within socially assistive robotics, informing future technology that supports dignity, agency and preventative care.