Self-driving cars may need to adapt to share roads safely with runners, study reveals

Published: 31 March 2026

A new study on how runners may choose to interact with self-driving cars is challenging assumptions on how automated vehicles will navigate safely on the roads of the future.

Researchers at the University of Glasgow and KAIST in South Korea led the study, which used augmented reality technology to explore, for the first time, how runners' behaviour differs from that of walkers when crossing roads and junctions.

https://youtu.be/0U0zaLp--1w

Their research revealed that runners are much more likely than walkers to take risks when negotiating traffic. During the study, runners often spent less time processing the road conditions around them and sometimes chose to run ahead of oncoming cars to maintain their target pace. On several occasions, they were 'struck' by virtual vehicles in the team's simulated road tests.

The team's findings could help develop better safety systems for automated vehicles, or AVs, which have largely been trained to expect the people around them to move at walking pace and behave much more cautiously.

The team suggest that light displays on the exteriors of cars, called 'external Human-Machine Interfaces' or eHMIs, could help AVs communicate their intentions more quickly and effectively.

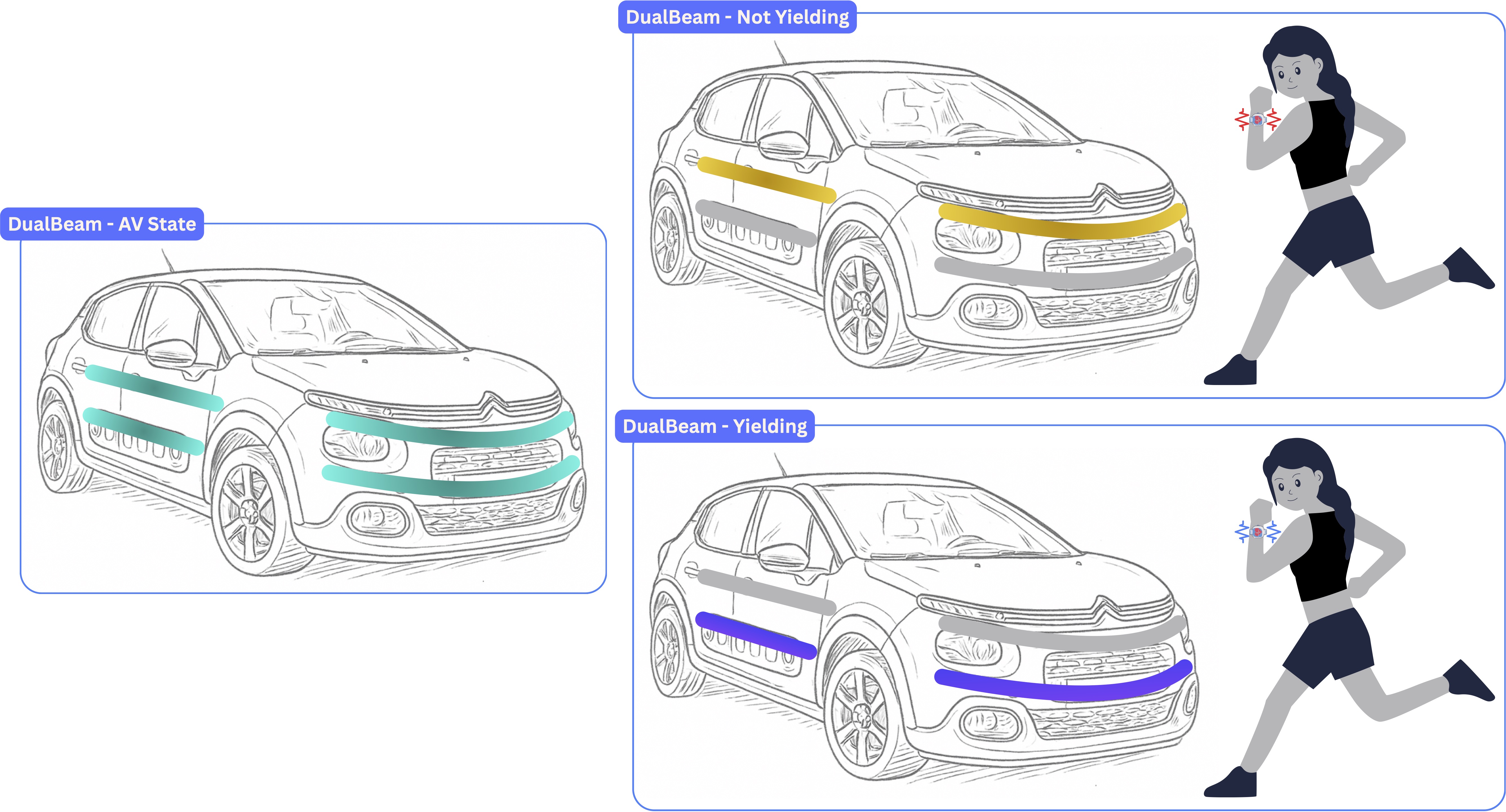

Well-designed eHMIs could replace the non-verbal cues, such as waves, eye contact and deceleration, that human drivers currently use to show people around them how they intend to proceed. Based on their research, the team suggest an eHMI design called DualBeam, which uses new types of lights on the vehicle to help runners make swift but well-informed crossing decisions.

Professor Stephen Brewster, of the University of Glasgow's School of Computing Science, said: "In our research group, we've been working for several years now on developing eHMIs which could help self-driving cars share the roads safely with vulnerable road users such as cyclists. In doing so, we realised that there's been very little research into how runners might expect to interact with driverless cars.

“That’s surprising on a couple of levels. One is that running is the most popular physical activity in the world, with more than 600 million people estimated to run recreationally. The other is that self-driving car journeys are booming, with a million AV trips a month in the USA alone. Clearly, it will be increasingly important to ensure that runners and AVs can share the roads safely in the years to come. We were keen to explore how self-driving cars could use eHMIs to speak the language of runners as well as bike riders to help maximise road safety.”

In order to test how walkers and runners might interact and communicate with self-driving cars, the team used an augmented-reality headset to mix real-world conditions with a life-size simulated AV. The virtual environment enabled them to safely test how self-driving cars might behave around runners and walkers in the future.

The study’s 24 participants went outdoors wearing the headset, which showed them a virtual urban environment overlaid on the real world. The participants either walked or ran towards a junction with a simulated AV approaching.

The simulated car displayed either no safety features on its exterior or one of two eHMIs. One, a ring of lights around the car that the team called LightRing, showed green to indicate that it was safe for the participant to continue or red to signal that they should stop. The other, a strip of animated cyan lights called CyanBand, swept its lights inward to show that the car was slowing and outward to show that it was accelerating. Sometimes the car stopped for the participants, and sometimes it did not.

Although both runners and walkers reported that they found it easier to determine the car's intentions when it used an eHMI, the differences in their behaviour were striking in every case.

When walking, participants had time to slow down or stop and verify the eHMI signals with the vehicle’s driving or braking behaviour. This increased their trust in the AV’s intentions and allowed them to make safe and informed crossing decisions.

In contrast, when running, participants felt more motivated to cross so they could keep exercising without interruption. They were unlikely to slow down or stop when approaching the crossing. This gave them less time to make informed decisions, resulting in an overreliance on the display, riskier crossing decisions, and a lack of trust in the AV's intentions.

The runners struggled to process the animation of the CyanBand lights in the limited time they had to focus on them while approaching the junction, while walkers found it useful. The LightRing display’s simple red-and-green colour scheme, on the other hand, was much more immediately legible to both groups.

Runners also collided with the vehicle three times during the study, while walkers avoided any contact with the cars. In two of the collisions, the runners saw a red light but chose to run ahead anyway, misjudging the vehicle’s pace and causing a simulated collision.

Ammar Al-Taie is one of the paper's corresponding authors. He worked on the research at the University of Glasgow before moving to KAIST in South Korea, where he is now based.

He said: “I’m a runner myself, and I’ve noticed that crossing roads while running feels different than when I’m walking. I’m much more motivated to keep moving because slowing to let a car pass and accelerating again takes a real physical effort. Paired with that is an increased mental effort of trying to process what’s around me as I’m running, and judging whether it’s safe for me to keep going at my current pace.

“In this study, we found evidence to suggest these are common feelings for runners, and that they do seem more tolerant of risk if it helps them keep moving. That makes them a riskier class of road user for self-driving cars to deal with, and suggests that more needs to be done to test self-driving cars with runners as well as walkers, and to find new ways to facilitate communication between cars and people.”

In the paper, the team propose adapting their findings into a new eHMI called DualBeam, which places two rows of lights around each side of the car, in colours chosen to be quickly understandable while avoiding the over-familiarity of red and green. Instead, an amber ring would signify that the car does not intend to yield, while a purple one would show that the car intends to let the runner pass safely.
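To make the three signalling schemes easier to compare, the short sketch below maps a vehicle's intent at the junction to what each display would show. It is an illustration only, not the team's implementation: the `Intent` enum and the function names are hypothetical, and the CyanBand mapping assumes that a yielding car is slowing while a non-yielding car is accelerating.

```python
from enum import Enum


class Intent(Enum):
    """Hypothetical encoding of what the AV plans to do at the junction."""
    YIELD = "yield"        # the car will slow and let the road user cross
    NO_YIELD = "no_yield"  # the car will keep going


def light_ring(intent: Intent) -> str:
    # LightRing: green means safe to continue, red means stop.
    return "green" if intent is Intent.YIELD else "red"


def cyan_band(intent: Intent) -> str:
    # CyanBand: lights sweep inward while slowing, outward while accelerating.
    # Assumption: yielding implies slowing; not yielding implies accelerating.
    return "sweep_inward" if intent is Intent.YIELD else "sweep_outward"


def dual_beam(intent: Intent) -> str:
    # DualBeam (proposed): purple means the car will let the runner pass,
    # amber means the car does not intend to yield.
    return "purple" if intent is Intent.YIELD else "amber"


if __name__ == "__main__":
    for intent in Intent:
        print(intent.value, light_ring(intent), cyan_band(intent), dual_beam(intent))
```

The contrast the study draws shows up directly here: LightRing and DualBeam each reduce the car's intent to a single colour that can be read at a glance, whereas CyanBand encodes it as an animation that has to be watched over time.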

The team also propose that DualBeam could integrate an early-warning system which would send vibration or sound alerts to runners’ smart watches or earbuds to highlight an approaching AV, enabling them to better assess risk without breaking stride.

The team's paper, titled 'Running into Traffic: Investigating External Human-Machine Interfaces for Automated Vehicle-Runner Interaction', will be presented at the CHI 2026 conference in Barcelona on Friday 17 April.

First published: 31 March 2026